To Build a Better Game

For 30-odd years I have been developing computer games. Over that time, I have seen many changes, both in technology and in general game design.

As I look back, however, I find that things are not always just moving forward, as we blindly assume. It pays to look back on occasion, to learn from the lessons of the past. Many of the challenges game developers face today are not altogether new, and close examination often reveals that vintage methodologies can still do the job.

Let’s take the subject of load times, for example, one of my pet peeves with many modern computer games.

On my Commodore VIC-20, the first computer I owned, programs were loaded using a datasette, a tape cassette recorder that was slower than a slug crossing a puddle of ice. Worse, datasettes were prone to loading errors, so there was always the possibility that the last 10 minutes’ worth of loading had been wasted because the program had not loaded correctly, and you’d have to do it all over again after adjusting the tape head with a screwdriver. (Strange that we look back so fondly on the good old days, isn’t it?)

The arrival of floppy disks improved things a bit, but as the data throughput became faster, the amount of data we needed to load grew exponentially along with it. In the end, loading times remained long and disk swaps became insufferably tedious.

Hard drives arrived and made things a whole lot easier and faster, but alas, in step with Moore’s Law, the hunger for data once again outgrew performance exponentially, even as drives became faster and faster. Today, even a solid-state drive seems too slow to handle your everyday office software appropriately.

In today’s games, it is common that gigabytes of data have to be transferred from a storage medium into the computer. High-resolution textures, light maps, dirt maps, geometry, audio files and other data create a bottleneck that very quickly slows down the load times of any game.

When we first encountered this problem in the 80s, the most common approach to reducing load times was to encode data. “Know your data” was a key mantra at the time. If you have a set of data and you know every value in it is simply on or off, why waste 8 bits on each value when one will do? In this fashion, data could oftentimes be reduced to a fraction of their original size, thus reducing load times.
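
As a minimal sketch of that kind of encoding, the snippet below packs on/off values into single bits, eight to a byte. The function names and the "visited tile" example are made up for illustration, not taken from any particular game.

```python
def pack_flags(flags):
    """Pack a sequence of booleans into bytes, eight flags per byte."""
    packed = bytearray((len(flags) + 7) // 8)
    for i, flag in enumerate(flags):
        if flag:
            packed[i // 8] |= 1 << (i % 8)
    return bytes(packed)


def unpack_flags(packed, count):
    """Recover the first `count` booleans from the packed bytes."""
    return [bool(packed[i // 8] & (1 << (i % 8))) for i in range(count)]


# Hypothetical data: 1,000 "has this tile been visited?" flags.
visited = [i % 3 == 0 for i in range(1000)]
packed = pack_flags(visited)
print(len(visited), "flags stored in", len(packed), "bytes")
```

Stored one flag per byte, the same list would take 1,000 bytes; packed, it takes an eighth of that, and unpacking is nothing more than a shift and a mask per value.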

As processors became more powerful, the next step was usually compression. Here, more intelligent and powerful algorithms were put to use that were able to compress all sorts of data without any loss of information, especially when datasets were optimized by packing together clusters of the same type. Text files compress very differently, for example, than image files, and compressing each type separately would yield much better results.
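
To illustrate the effect of grouping by type, here is a small experiment using Python's standard `zlib` module. The data are synthetic stand-ins (a repeated phrase for text, seeded random bytes for noisy image data), so treat the result as an illustration of the principle under those assumptions rather than a benchmark.

```python
import random
import zlib

CHUNK = b"the quick brown fox "   # 20 bytes of stand-in "text" data
COUNT = 2000

rng = random.Random(42)           # fixed seed so the run is repeatable
noise_chunks = [
    bytes(rng.getrandbits(8) for _ in range(len(CHUNK)))
    for _ in range(COUNT)
]                                 # stand-in for noisy image data

# Layout A: text and image records interleaved in a single stream.
interleaved = b"".join(CHUNK + noise for noise in noise_chunks)

# Layout B: each type grouped into its own stream, compressed separately.
text_stream = CHUNK * COUNT
image_stream = b"".join(noise_chunks)

size_interleaved = len(zlib.compress(interleaved))
size_grouped = len(zlib.compress(text_stream)) + len(zlib.compress(image_stream))

print("interleaved:", size_interleaved, "bytes")
print("grouped:    ", size_grouped, "bytes")
```

On this input the grouped layout should come out smaller, because the compressor can collapse the repetitive text into a handful of long matches instead of rediscovering it between every block of noise.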

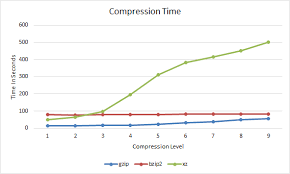

But decompression takes time and space, so programmers are always trying to carefully evaluate the trade-offs. When decompressing data becomes more expensive and slower than loading it, it loses its purpose—unless obfuscation is your goal.
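
The trade-off can be put into rough numbers. The sketch below assumes the simplest possible model, in which reading and decompressing happen one after the other with no overlap, and every figure in it is hypothetical.

```python
def load_seconds(size_mb, disk_mb_per_s, ratio=1.0, decompress_mb_per_s=None):
    """Estimate load time for `size_mb` of uncompressed data.

    ratio is compressed size / original size (1.0 means stored raw).
    decompress_mb_per_s is the decompressor's output rate, or None if
    the data are stored uncompressed.
    """
    read_time = size_mb * ratio / disk_mb_per_s
    if decompress_mb_per_s is None:
        return read_time
    return read_time + size_mb / decompress_mb_per_s


# Hypothetical numbers: 2,000 MB of assets on a 500 MB/s drive.
raw = load_seconds(2000, 500)
slow_codec = load_seconds(2000, 500, ratio=0.5, decompress_mb_per_s=200)
fast_codec = load_seconds(2000, 500, ratio=0.5, decompress_mb_per_s=2000)

print("raw:", raw, "s  slow codec:", slow_codec, "s  fast codec:", fast_codec, "s")
```

With these made-up figures, halving the data on disk only pays off if the decompressor is fast; a slow one turns a 4-second load into a 12-second one.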

We reached that point around the time that large hard drives became commonplace and storage space became seemingly of no real consequence. Not surprisingly, it went hand in hand with the emergence of object-oriented programming. Around that time, almost all game developers moved from C to C++ as their language of choice. As a consequence of encapsulation and private object implementations, the mantra “know your data” became frowned upon. You were not supposed to know how a method implements its workings or what its internal data looks like. Just assume it works!

When it came to loading and storing data for these objects, programmers would oftentimes simply serialize the object and dump it straight onto the hard drive. Simple, fast, not much can go wrong.

As games grew in size, the widespread use of game engines such as Unity, Gamebryo and Unreal further compounded the problem. No longer interested in internal workings, developers would use the built-in functionality without giving data storage much thought.

As a result, load times for games increased again, dramatically. Some developers tried to counter with compression, but as I mentioned before, better compression comes at a cost. As you sit there and stare at the loading bar on your screen, you’re not actually experiencing disk transfer times, but rather decompression taking the limelight.

To me, it is simply unacceptable that I have to wait for 2 or more minutes to get back into the game after my character dies, as is the case in many games these days.

So how can we solve this problem and rein in load times? A multi-pronged approach is definitely necessary.

The first and most important thing we need is goodwill. As developers, we have to WANT to bring load times down. Only then will we spend the necessary time to analyze the issue and try to find solutions for it. Since every game is unique, optimizing load times is a custom process, just like optimizing a rendering pipeline is. But first, we have to see it as a problem that requires fixing before we can do something about it.

In the case of a save game re-load, as described above, a number of methods come to mind, and instantly the vintage mantra “know your data” becomes relevant again. If you don’t know what you’re dealing with, you simply cannot optimize it.

Assume, if you wish, that you load a game, stand there, and one second later, without ever moving, your character dies from the effects of a poison. What happened? Nothing!

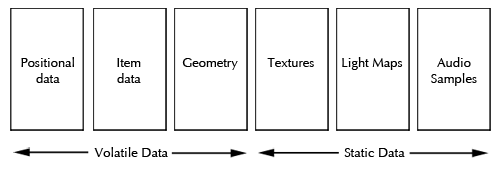

At least nothing that should warrant a 2-minute data load in an age where disk data throughput is in the hundreds of megabytes per second. We did not destroy any geometry. Did nothing that affected any of the textures or light maps. No items were touched. We did not move around either, requiring no additional geometry or texture pre-caching. No new opponents were spawned. Everything that happened was limited to a small subset of data, such as opponents moving around, perhaps, the character’s stats themselves, perhaps some raindrops falling—stuff like that.

With a bit of effort on the developer’s side, and with the right technology and methodologies in place, all of that could be reloaded or even reset within a fraction of a second. So, why not develop a core system that flags data as dirty when it has been modified? Why not develop loaders that are intelligent enough to reload only the data that need to be reloaded?
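
A dirty-flag system of this kind could be sketched roughly as follows. The class, the block names and the loader callbacks are all hypothetical; a real engine would hang this off its own resource manager.

```python
class DataBlock:
    """One reloadable unit of game state (hypothetical structure)."""

    def __init__(self, name, load_fn):
        self.name = name
        self._load_fn = load_fn    # reads this block's saved state from storage
        self.data = load_fn()
        self.dirty = False

    def modify(self, new_data):
        self.data = new_data
        self.dirty = True          # this block now differs from the save

    def reload_if_dirty(self):
        if not self.dirty:
            return False           # untouched since the save: skip the load
        self.data = self._load_fn()
        self.dirty = False
        return True


def reload_save(blocks):
    """Reload only the blocks that changed; return the names reloaded."""
    return [block.name for block in blocks if block.reload_if_dirty()]


blocks = [
    DataBlock("textures", lambda: "texture data"),
    DataBlock("geometry", lambda: "geometry data"),
    DataBlock("player_stats", lambda: {"hp": 100}),
]
blocks[2].modify({"hp": 0})        # the poison kills the character
reloaded = reload_save(blocks)
print(reloaded)
```

In the poison scenario, only the player's stats are marked dirty, so the textures and geometry are never touched on reload.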

In practice, it would require grouping various types of data together—hence the return of the “know your data” mantra. If you keep all positional information in a storage pool together, you can elect to load only these positional data. If you group geometry together, you can selectively load only geometry, if it has been affected. If the player has not even moved and there is no need to reload gigabytes worth of textures, why do it? I suppose you get the idea.
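
One way to make grouped data selectively loadable is to store each pool as its own contiguous section behind a small index. The file layout below (a length-prefixed JSON index followed by the raw sections) and the pool names are assumptions made for this sketch, not an established format.

```python
import io
import json
import struct


def write_pools(stream, pools):
    """Write an index of pool name -> [offset, size], then each pool's bytes."""
    index, offset = {}, 0
    for name, payload in pools.items():
        index[name] = [offset, len(payload)]
        offset += len(payload)
    index_bytes = json.dumps(index).encode("utf-8")
    stream.write(struct.pack("<I", len(index_bytes)))   # 4-byte index length
    stream.write(index_bytes)
    for payload in pools.values():
        stream.write(payload)


def read_pools(stream, wanted):
    """Seek to and read only the requested pools, skipping everything else."""
    stream.seek(0)
    (index_len,) = struct.unpack("<I", stream.read(4))
    index = json.loads(stream.read(index_len).decode("utf-8"))
    base = 4 + index_len            # the sections start right after the index
    result = {}
    for name in wanted:
        offset, size = index[name]
        stream.seek(base + offset)
        result[name] = stream.read(size)
    return result


save = io.BytesIO()                 # stands in for a save file on disk
write_pools(save, {
    "textures": b"T" * 1_000_000,
    "geometry": b"G" * 200_000,
    "positions": b"P" * 512,
})
loaded = read_pools(save, ["positions"])
print({name: len(data) for name, data in loaded.items()})
```

Here the loader reads 512 bytes of positional data and never touches the megabyte of textures sitting in the same file.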

The key is to identify what has changed and to find ways that allow you to access and reload only those portions of data that are necessary. Your reload times will very quickly drop through the floor in many instances.

The same is true for the basic game load when you start it up for the first time. If data is properly organized, it can be treated in a way that is specific to the dataset. Instead of compressing certain types of data, they could be encoded instead, since decoding is typically faster by a significant factor. Other types of data may lend themselves to compression using one type of algorithm, whereas others would benefit from a different type, which may decompress much faster. The key is to take the time to explore, analyze and evaluate your data and your needs and then build an optimized strategy around it.
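
A per-dataset strategy might look like the following sketch, which pairs each kind of data with its own encode and decode routine. The dataset kinds and the choice of codec for each are assumptions made for illustration; the right mapping depends entirely on your own data.

```python
import zlib


def rle_encode(data):
    """Run-length encode bytes as (run length, value) pairs, runs up to 255."""
    out = bytearray()
    i = 0
    while i < len(data):
        run = 1
        while i + run < len(data) and data[i + run] == data[i] and run < 255:
            run += 1
        out += bytes((run, data[i]))
        i += run
    return bytes(out)


def rle_decode(encoded):
    """Expand (run length, value) pairs back into the original bytes."""
    out = bytearray()
    for i in range(0, len(encoded), 2):
        out += bytes([encoded[i + 1]]) * encoded[i]
    return bytes(out)


# Hypothetical mapping from dataset kind to (encode, decode).
CODECS = {
    "tile_map": (rle_encode, rle_decode),             # long runs: simple encoding
    "dialogue": (zlib.compress, zlib.decompress),     # text: general compression
    "audio": (bytes, bytes),                          # assumed pre-compressed: store as-is
}


def store(kind, data):
    encode, _ = CODECS[kind]
    return encode(data)


def load(kind, blob):
    _, decode = CODECS[kind]
    return decode(blob)


tile_map = bytes([0]) * 900 + bytes([3]) * 100        # mostly empty map
stored = store("tile_map", tile_map)
print(len(tile_map), "bytes stored as", len(stored), "bytes")
```

The point of the table is that the decision is made once per kind of data, after looking at what the data actually contain, rather than running everything through a single compressor.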

Is this a trivial task? No, by no means. It requires very careful analysis and structuring of data layouts, as well as the implementation of logic that flags, identifies, isolates and manages data loads, but it is important work. Loaders may become quite a bit more complex but it is a fair, one-time price to pay for an improved user experience. Even a very basic system using different layers of data and loading them selectively will result in shorter load times right off the bat.

To put it in perspective for you, if you can cut down the loading time of your game by one minute for a million game sessions, you have effectively freed up almost two years’ worth of time (a million minutes is roughly 694 days). Your players will thank you for it!